redo

parent 3c30321175
commit 44ba435716

@@ -1,30 +0,0 @@
/up.conf
/unifi-poller
/unifi-poller*.gz
/unifi-poller*.zip
/unifi-poller*.1
/unifi-poller*.deb
/unifi-poller*.rpm
/unifi-poller.*.arm
/unifi-poller.exe
/unifi-poller.*.macos
/unifi-poller.*.linux
/unifi-poller.rb
*.sha256
/vendor
.DS_Store
*~
/package_build_*
/release
MANUAL
MANUAL.html
README
README.html
/unifi-poller_manual.html
/homebrew_release_repo
/.metadata.make
bitly_token
github_deploy_key
gpg.signing.key
.secret-files.tar
*.so

@@ -1,44 +0,0 @@
# Each line must have an export clause.
# This file is parsed and sourced by the Makefile, Docker and Homebrew builds.
# Powered by Application Builder: https://github.com/golift/application-builder

# Must match the repo name.
BINARY="unifi-poller"
# github username
GHUSER="davidnewhall"
# Github repo containing homebrew formula repo.
HBREPO="golift/homebrew-mugs"
MAINT="David Newhall II <david at sleepers dot pro>"
VENDOR="Go Lift <code at golift dot io>"
DESC="Polls a UniFi controller, exports metrics to InfluxDB and Prometheus"
GOLANGCI_LINT_ARGS="--enable-all -D gochecknoglobals -D funlen -e G402 -D gochecknoinits"
# Example must exist at examples/$CONFIG_FILE.example
CONFIG_FILE="up.conf"
LICENSE="MIT"
# FORMULA is either 'service' or 'tool'. Services run as a daemon, tools do not.
# This affects the homebrew formula (launchd) and linux packages (systemd).
FORMULA="service"

export BINARY GHUSER HBREPO MAINT VENDOR DESC GOLANGCI_LINT_ARGS CONFIG_FILE LICENSE FORMULA

# The rest is mostly automatic.
# Fix the repo if it doesn't match the binary name.
# Provide a better URL if one exists.

# Used for source links and wiki links.
SOURCE_URL="https://github.com/${GHUSER}/${BINARY}"
# Used for documentation links.
URL="${SOURCE_URL}"

# Dynamic. Recommend not changing.
VVERSION=$(git describe --abbrev=0 --tags $(git rev-list --tags --max-count=1))
VERSION="$(echo $VVERSION | tr -d v | grep -E '^\S+$' || echo development)"
# This produces a 0 in some environments (like Homebrew), but it's only used for packages.
ITERATION=$(git rev-list --count --all || echo 0)
DATE="$(date -u +%Y-%m-%dT%H:%M:%SZ)"
COMMIT="$(git rev-parse --short HEAD || echo 0)"

# This is a custom download path for homebrew formula.
SOURCE_PATH=https://golift.io/${BINARY}/archive/v${VERSION}.tar.gz

export SOURCE_URL URL VVERSION VERSION ITERATION DATE COMMIT SOURCE_PATH

Binary file not shown.

@@ -1,84 +0,0 @@
# Powered by Application Builder: https://github.com/golift/application-builder
language: go
git:
  depth: false
addons:
  apt:
    packages:
      - ruby-dev
      - rpm
      - build-essential
      - git
      - libgnome-keyring-dev
      - fakeroot
      - zip
      - debsigs
      - gnupg
      - expect
go:
  - 1.13.x
services:
  - docker
install:
  - mkdir -p $GOPATH/bin
  # Download the `dep` binary to bin folder in $GOPATH
  - curl -sLo $GOPATH/bin/dep https://github.com/golang/dep/releases/download/v0.5.3/dep-linux-amd64
  - chmod +x $GOPATH/bin/dep
  # download super-linter: golangci-lint
  - curl -sL https://install.goreleaser.com/github.com/golangci/golangci-lint.sh | sh -s -- -b $(go env GOPATH)/bin latest
  - rvm install 2.0.0
  - rvm 2.0.0 do gem install --no-document fpm
before_script:
  - gpg --import gpg.public.key
  # Create your own deploy key, tar it, and encrypt the file to make this work. Optionally add a bitly_token file to the archive.
  - openssl aes-256-cbc -K $encrypted_9f3147001275_key -iv $encrypted_9f3147001275_iv -in .secret-files.tar.enc -out .secret-files.tar -d
  - tar -xf .secret-files.tar
  - gpg --import gpg.signing.key
  - rm -f gpg.signing.key .secret-files.tar
  - source .metadata.sh
  - make vendor
script:
  # Test Go and Docker.
  - make test
  - make docker
  # Test built docker image.
  - docker run $BINARY -v 2>&1 | grep -Eq "^$BINARY v$VERSION"
  # Build everything
  - rvm 2.0.0 do make release
after_success:
  # Display Release Folder
  - ls -l release/
  # Setup the ssh client so we can clone and push to the homebrew formula repo.
  # You must put github_deploy_key into .secret-files.tar.enc
  # This is an ssh key added to your homebrew formula repo.
  - |
    mkdir -p $HOME/.ssh
    declare -r SSH_FILE="$(mktemp -u $HOME/.ssh/XXXXX)"
    echo -e "Host github.com\n\tStrictHostKeyChecking no\n" >> $HOME/.ssh/config
    [ ! -f github_deploy_key ] || (mv github_deploy_key $SSH_FILE \
      && chmod 600 "$SSH_FILE" \
      && printf "%s\n" \
        "Host github.com" \
        " IdentityFile $SSH_FILE" \
        " StrictHostKeyChecking no" \
        " LogLevel ERROR" >> $HOME/.ssh/config)
deploy:
  - provider: releases
    api_key:

secure: GsvW0m+EnRELQMk8DjH63VXinqbwse4FJ4vNUslOE6CZ8PBXPrH0ZgaI7ic/uxRtm7CYj0sir4CZq62W5l6uhoXCCQfjOnmJspqnQcrFZ1xRdWktsNXaRwM6hlzaUThsJ/1PD9Psc66uKXBYTg0IlUz0yjZAZk7tCUE4libuj41z40ZKxUcbfcNvH4Njc9IpNB4QSA3ss+a9/6ZwBz4tHVamsGIrzaE0Zf99ItNBYvaOwhM2rC/NWIsFmwt8w4rIA2NIrkZgMDV+Z2Niqh4JRLAWCQNx/RjC5U52lG2yhqivUC3TromZ+q4O4alUltsyIzF2nVanLWgJmbeFo8uXT5A+gd3ovSkFLU9medXd9i4kap7kN/o5m9p5QZvrdEYHEmIU4ml5rjT2EQQVy5CtSmpiRAbhpEJIvA1wDtRq8rdz8IVfJXkHNjg2XdouNmMMWqa3OkEPw21+uxsqv4LscW/6ZjsavzL5SSdnBRU9n79EfGJE/tJLKiNumah/vLuJ5buNhgqmCdtX/Tg+DhQS1BOyYg4l4L8s9IIKZgFRwrOPsZnA/KsrWg4ZsjJ87cqKCaT/qs2EJx5odZcZWJYLBngeO8Tc6cQtLgJdieY2oEKo51Agq4rgikZDt21m6TY9/R5lPN0piwdpy3ZGKfv1ijXx74raMT03qskputzMCvc=

    overwrite: true
    skip_cleanup: true
    file_glob: true
    file: release/*
    on:
      tags: true
  - provider: script
    script: scripts/formula-deploy.sh
    on:
      tags: true
  - provider: script
    script: scripts/package-deploy.sh
    skip_cleanup: true
    on:
      all_branches: true
      condition: $TRAVIS_BRANCH =~ ^(master|v[0-9.]+)$

@@ -1,73 +0,0 @@
_This doc is far from complete._

# Build Pipeline

Let's talk about how the software gets built for our users before we talk about
making changes to it.

## TravisCI

This repo is tested, built and deployed by [Travis-CI](https://travis-ci.org/davidnewhall/unifi-poller).

The [.travis.yml](.travis.yml) file in this repo coordinates the entire process.
As long as this document is kept up to date, this is what the travis file does:

- Creates a go-capable build environment on a Linux host, some debian variant.
- Installs ruby-dev to get rubygems.
- Installs other build tools including rpm and fpm from rubygems.
- Starts docker, builds the docker container and runs it.
- Tests that the Docker container ran and produced expected output.
- Makes a release. `make release`: This does a lot of things, controlled by the [Makefile](Makefile).
  - Runs go tests and go linters.
  - Compiles the application binaries for Windows, Linux and macOS.
  - Compiles a man page that goes into the packages.
  - Creates rpm and deb packages using fpm.
  - Puts the packages, gzipped binaries and files containing the SHA256s of each asset into a release folder.

After the release is built and Docker image tested:
- Deploys the release assets to the tagged release on GitHub using an encrypted GitHub Token (api key).
- Runs [another script](scripts/formula-deploy.sh) to create and upload a Homebrew formula to [golift/homebrew-mugs](https://github.com/golift/homebrew-mugs).
  - Uses an encrypted SSH key to upload the updated formula to the repo.
- Travis does nothing else with Docker; it just makes sure the thing compiles and runs.

### Homebrew

It's a Mac thing.

[Homebrew](https://brew.sh) is all I use at home. Please don't break the homebrew
formula stuff; it took a lot of pain to get it just right. I am very interested
in how it works for you.

### Docker

Docker is built automatically by Docker Cloud using the Dockerfile in the path
[init/docker/Dockerfile](init/docker/Dockerfile). Some of the configuration is
done in the Cloud service under my personal account `golift`, but the majority
happens in the build files in the [init/docker/hooks/](init/docker/hooks/) directory.

If you need to change the Dockerfile, please clearly explain what problem your
changes are solving, and how it has been tested and validated. As far as I'm
concerned this file should never need to change again, but I'm not a Docker expert;
you're welcome to prove me wrong.

# Contributing

Make a pull request and tell me what you're fixing. Pretty simple. If I need to
I'll add more "rules." For now I'm happy to have help. Thank you!

## Wiki

**If you see typos, errors, omissions, etc, please fix them.**

At this point, the wiki is pretty solid. Please keep your edits brief and without
too much opinion. If you want to provide a way to do something, please also provide
any alternatives you're aware of. If you're not sure, just open an issue and we can
hash it out. I'm reasonable.

## UniFi Library

If you're trying to fix something in the UniFi data collection (i.e. you got an
unmarshal error, or you want to add something I didn't include) then you
should look at the [UniFi library](https://github.com/golift/unifi). All the
data collection and export code lives there. Contributions and issues are welcome
on that code base as well.

@@ -1,22 +0,0 @@
MIT LICENSE.
Copyright (c) 2016 Garrett Bjerkhoel
Copyright (c) 2018-2019 David Newhall II

Permission is hereby granted, free of charge, to any person obtaining
a copy of this software and associated documentation files (the
"Software"), to deal in the Software without restriction, including
without limitation the rights to use, copy, modify, merge, publish,
distribute, sublicense, and/or sell copies of the Software, and to
permit persons to whom the Software is furnished to do so, subject to
the following conditions:

The above copyright notice and this permission notice shall be
included in all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND,
EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF
MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND
NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE
LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION
OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION
WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

@@ -1,311 +0,0 @@
# This Makefile is written to be as generic as possible.
# Setting the variables in .metadata.sh and creating the paths in the repo makes this work.
# See more: https://github.com/golift/application-builder

# Suck in our application information.
IGNORED:=$(shell bash -c "source .metadata.sh ; env | sed 's/=/:=/;s/^/export /' > .metadata.make")

# md2roff turns markdown into man files and html files.
MD2ROFF_BIN=github.com/github/hub/md2roff-bin


# Travis CI passes the version in. Local builds get it from the current git tag.
ifeq ($(VERSION),)
  include .metadata.make
else
  # Preserve the passed-in version & iteration (homebrew).
  _VERSION:=$(VERSION)
  _ITERATION:=$(ITERATION)
  include .metadata.make
  VERSION:=$(_VERSION)
  ITERATION:=$(_ITERATION)
endif

# rpm is weird and changes - to _ in versions.
RPMVERSION:=$(shell echo $(VERSION) | tr -- - _)

PACKAGE_SCRIPTS=
ifeq ($(FORMULA),service)
  PACKAGE_SCRIPTS=--after-install scripts/after-install.sh --before-remove scripts/before-remove.sh
endif

define PACKAGE_ARGS
$(PACKAGE_SCRIPTS) \
--name $(BINARY) \
--deb-no-default-config-files \
--rpm-os linux \
--iteration $(ITERATION) \
--license $(LICENSE) \
--url $(URL) \
--maintainer "$(MAINT)" \
--vendor "$(VENDOR)" \
--description "$(DESC)" \
--config-files "/etc/$(BINARY)/$(CONFIG_FILE)"
endef

PLUGINS:=$(patsubst plugins/%/main.go,%,$(wildcard plugins/*/main.go))

VERSION_LDFLAGS:= \
  -X github.com/prometheus/common/version.Branch=$(TRAVIS_BRANCH) \
  -X github.com/prometheus/common/version.BuildDate=$(DATE) \
  -X github.com/prometheus/common/version.Revision=$(COMMIT) \
  -X github.com/prometheus/common/version.Version=$(VERSION)-$(ITERATION)

# Makefile targets follow.

all: build

# Prepare a release. Called in Travis CI.
release: clean macos windows linux_packages
	# Preparing a release!
	mkdir -p $@
	mv $(BINARY).*.macos $(BINARY).*.linux $@/
	gzip -9r $@/
	for i in $(BINARY)*.exe; do zip -9qm $@/$$i.zip $$i;done
	mv *.rpm *.deb $@/
	# Generating File Hashes
	openssl dgst -r -sha256 $@/* | sed 's#release/##' | tee $@/checksums.sha256.txt


# Delete all build assets.
clean:
	# Cleaning up.
	rm -f $(BINARY) $(BINARY).*.{macos,linux,exe}{,.gz,.zip} $(BINARY).1{,.gz} $(BINARY).rb
	rm -f $(BINARY){_,-}*.{deb,rpm} v*.tar.gz.sha256 examples/MANUAL .metadata.make
	rm -f cmd/$(BINARY)/README{,.html} README{,.html} ./$(BINARY)_manual.html
	rm -rf package_build_* release

# Build a man page from a markdown file using md2roff.
# This also turns the repo readme into an html file.
# md2roff is needed to build the man file and html pages from the READMEs.
man: $(BINARY).1.gz
$(BINARY).1.gz: md2roff
	# Building man page. Build dependency first: md2roff
	go run $(MD2ROFF_BIN) --manual $(BINARY) --version $(VERSION) --date "$(DATE)" examples/MANUAL.md
	gzip -9nc examples/MANUAL > $@
	mv examples/MANUAL.html $(BINARY)_manual.html

md2roff:
	go get $(MD2ROFF_BIN)

# TODO: provide a template that adds the date to the built html file.
readme: README.html
README.html: md2roff
	# This turns README.md into README.html
	go run $(MD2ROFF_BIN) --manual $(BINARY) --version $(VERSION) --date "$(DATE)" README.md

# Binaries

build: $(BINARY)
$(BINARY): main.go pkg/*/*.go
	go build -o $(BINARY) -ldflags "-w -s $(VERSION_LDFLAGS)"

linux: $(BINARY).amd64.linux
$(BINARY).amd64.linux: main.go pkg/*/*.go
	# Building linux 64-bit x86 binary.
	GOOS=linux GOARCH=amd64 go build -o $@ -ldflags "-w -s $(VERSION_LDFLAGS)"

linux386: $(BINARY).i386.linux
$(BINARY).i386.linux: main.go pkg/*/*.go
	# Building linux 32-bit x86 binary.
	GOOS=linux GOARCH=386 go build -o $@ -ldflags "-w -s $(VERSION_LDFLAGS)"

arm: arm64 armhf

arm64: $(BINARY).arm64.linux
$(BINARY).arm64.linux: main.go pkg/*/*.go
	# Building linux 64-bit ARM binary.
	GOOS=linux GOARCH=arm64 go build -o $@ -ldflags "-w -s $(VERSION_LDFLAGS)"

armhf: $(BINARY).armhf.linux
$(BINARY).armhf.linux: main.go pkg/*/*.go
	# Building linux 32-bit ARM binary.
	GOOS=linux GOARCH=arm GOARM=6 go build -o $@ -ldflags "-w -s $(VERSION_LDFLAGS)"

macos: $(BINARY).amd64.macos
$(BINARY).amd64.macos: main.go pkg/*/*.go
	# Building darwin 64-bit x86 binary.
	GOOS=darwin GOARCH=amd64 go build -o $@ -ldflags "-w -s $(VERSION_LDFLAGS)"

exe: $(BINARY).amd64.exe
windows: $(BINARY).amd64.exe
$(BINARY).amd64.exe: main.go pkg/*/*.go
	# Building windows 64-bit x86 binary.
	GOOS=windows GOARCH=amd64 go build -o $@ -ldflags "-w -s $(VERSION_LDFLAGS)"

# Packages

linux_packages: rpm deb rpm386 deb386 debarm rpmarm debarmhf rpmarmhf

rpm: $(BINARY)-$(RPMVERSION)-$(ITERATION).x86_64.rpm
$(BINARY)-$(RPMVERSION)-$(ITERATION).x86_64.rpm: package_build_linux check_fpm
	@echo "Building 'rpm' package for $(BINARY) version '$(RPMVERSION)-$(ITERATION)'."
	fpm -s dir -t rpm $(PACKAGE_ARGS) -a x86_64 -v $(RPMVERSION) -C $<
	[ "$(SIGNING_KEY)" == "" ] || expect -c "spawn rpmsign --key-id=$(SIGNING_KEY) --resign $(BINARY)-$(RPMVERSION)-$(ITERATION).x86_64.rpm; expect -exact \"Enter pass phrase: \"; send \"$(PRIVATE_KEY)\r\"; expect eof"

deb: $(BINARY)_$(VERSION)-$(ITERATION)_amd64.deb
$(BINARY)_$(VERSION)-$(ITERATION)_amd64.deb: package_build_linux check_fpm
	@echo "Building 'deb' package for $(BINARY) version '$(VERSION)-$(ITERATION)'."
	fpm -s dir -t deb $(PACKAGE_ARGS) -a amd64 -v $(VERSION) -C $<
	[ "$(SIGNING_KEY)" == "" ] || expect -c "spawn debsigs --default-key="$(SIGNING_KEY)" --sign=origin $(BINARY)_$(VERSION)-$(ITERATION)_amd64.deb; expect -exact \"Enter passphrase: \"; send \"$(PRIVATE_KEY)\r\"; expect eof"

rpm386: $(BINARY)-$(RPMVERSION)-$(ITERATION).i386.rpm
$(BINARY)-$(RPMVERSION)-$(ITERATION).i386.rpm: package_build_linux_386 check_fpm
	@echo "Building 32-bit 'rpm' package for $(BINARY) version '$(RPMVERSION)-$(ITERATION)'."
	fpm -s dir -t rpm $(PACKAGE_ARGS) -a i386 -v $(RPMVERSION) -C $<
	[ "$(SIGNING_KEY)" == "" ] || expect -c "spawn rpmsign --key-id=$(SIGNING_KEY) --resign $(BINARY)-$(RPMVERSION)-$(ITERATION).i386.rpm; expect -exact \"Enter pass phrase: \"; send \"$(PRIVATE_KEY)\r\"; expect eof"

deb386: $(BINARY)_$(VERSION)-$(ITERATION)_i386.deb
$(BINARY)_$(VERSION)-$(ITERATION)_i386.deb: package_build_linux_386 check_fpm
	@echo "Building 32-bit 'deb' package for $(BINARY) version '$(VERSION)-$(ITERATION)'."
	fpm -s dir -t deb $(PACKAGE_ARGS) -a i386 -v $(VERSION) -C $<
	[ "$(SIGNING_KEY)" == "" ] || expect -c "spawn debsigs --default-key="$(SIGNING_KEY)" --sign=origin $(BINARY)_$(VERSION)-$(ITERATION)_i386.deb; expect -exact \"Enter passphrase: \"; send \"$(PRIVATE_KEY)\r\"; expect eof"

rpmarm: $(BINARY)-$(RPMVERSION)-$(ITERATION).arm64.rpm
$(BINARY)-$(RPMVERSION)-$(ITERATION).arm64.rpm: package_build_linux_arm64 check_fpm
	@echo "Building 64-bit ARM8 'rpm' package for $(BINARY) version '$(RPMVERSION)-$(ITERATION)'."
	fpm -s dir -t rpm $(PACKAGE_ARGS) -a arm64 -v $(RPMVERSION) -C $<
	[ "$(SIGNING_KEY)" == "" ] || expect -c "spawn rpmsign --key-id=$(SIGNING_KEY) --resign $(BINARY)-$(RPMVERSION)-$(ITERATION).arm64.rpm; expect -exact \"Enter pass phrase: \"; send \"$(PRIVATE_KEY)\r\"; expect eof"

debarm: $(BINARY)_$(VERSION)-$(ITERATION)_arm64.deb
$(BINARY)_$(VERSION)-$(ITERATION)_arm64.deb: package_build_linux_arm64 check_fpm
	@echo "Building 64-bit ARM8 'deb' package for $(BINARY) version '$(VERSION)-$(ITERATION)'."
	fpm -s dir -t deb $(PACKAGE_ARGS) -a arm64 -v $(VERSION) -C $<
	[ "$(SIGNING_KEY)" == "" ] || expect -c "spawn debsigs --default-key="$(SIGNING_KEY)" --sign=origin $(BINARY)_$(VERSION)-$(ITERATION)_arm64.deb; expect -exact \"Enter passphrase: \"; send \"$(PRIVATE_KEY)\r\"; expect eof"

rpmarmhf: $(BINARY)-$(RPMVERSION)-$(ITERATION).armhf.rpm
$(BINARY)-$(RPMVERSION)-$(ITERATION).armhf.rpm: package_build_linux_armhf check_fpm
	@echo "Building 32-bit ARM6/7 HF 'rpm' package for $(BINARY) version '$(RPMVERSION)-$(ITERATION)'."
	fpm -s dir -t rpm $(PACKAGE_ARGS) -a armhf -v $(RPMVERSION) -C $<
	[ "$(SIGNING_KEY)" == "" ] || expect -c "spawn rpmsign --key-id=$(SIGNING_KEY) --resign $(BINARY)-$(RPMVERSION)-$(ITERATION).armhf.rpm; expect -exact \"Enter pass phrase: \"; send \"$(PRIVATE_KEY)\r\"; expect eof"

debarmhf: $(BINARY)_$(VERSION)-$(ITERATION)_armhf.deb
$(BINARY)_$(VERSION)-$(ITERATION)_armhf.deb: package_build_linux_armhf check_fpm
	@echo "Building 32-bit ARM6/7 HF 'deb' package for $(BINARY) version '$(VERSION)-$(ITERATION)'."
	fpm -s dir -t deb $(PACKAGE_ARGS) -a armhf -v $(VERSION) -C $<
	[ "$(SIGNING_KEY)" == "" ] || expect -c "spawn debsigs --default-key="$(SIGNING_KEY)" --sign=origin $(BINARY)_$(VERSION)-$(ITERATION)_armhf.deb; expect -exact \"Enter passphrase: \"; send \"$(PRIVATE_KEY)\r\"; expect eof"

# Build an environment that can be packaged for linux.
package_build_linux: readme man plugins_linux_amd64 linux
	# Building package environment for linux.
	mkdir -p $@/usr/bin $@/etc/$(BINARY) $@/usr/share/man/man1 $@/usr/share/doc/$(BINARY) $@/usr/lib/$(BINARY)
	# Copying the binary, config file, unit file, and man page into the env.
	cp $(BINARY).amd64.linux $@/usr/bin/$(BINARY)
	cp *.1.gz $@/usr/share/man/man1
	rm -f $@/usr/lib/$(BINARY)/*.so
	cp *amd64.so $@/usr/lib/$(BINARY)/
	cp examples/$(CONFIG_FILE).example $@/etc/$(BINARY)/
	cp examples/$(CONFIG_FILE).example $@/etc/$(BINARY)/$(CONFIG_FILE)
	cp LICENSE *.html examples/*?.?* $@/usr/share/doc/$(BINARY)/
	[ "$(FORMULA)" != "service" ] || mkdir -p $@/lib/systemd/system
	[ "$(FORMULA)" != "service" ] || \
		sed -e "s/{{BINARY}}/$(BINARY)/g" -e "s/{{DESC}}/$(DESC)/g" \
		init/systemd/template.unit.service > $@/lib/systemd/system/$(BINARY).service

package_build_linux_386: package_build_linux linux386
	mkdir -p $@
	cp -r $</* $@/
	cp $(BINARY).i386.linux $@/usr/bin/$(BINARY)

package_build_linux_arm64: package_build_linux arm64
	mkdir -p $@
	cp -r $</* $@/
	cp $(BINARY).arm64.linux $@/usr/bin/$(BINARY)

package_build_linux_armhf: package_build_linux armhf
	mkdir -p $@
	cp -r $</* $@/
	cp $(BINARY).armhf.linux $@/usr/bin/$(BINARY)

check_fpm:
	@fpm --version > /dev/null || (echo "FPM missing. Install FPM: https://fpm.readthedocs.io/en/latest/installing.html" && false)

docker:
	docker build -f init/docker/Dockerfile \
		--build-arg "BUILD_DATE=$(DATE)" \
		--build-arg "COMMIT=$(COMMIT)" \
		--build-arg "VERSION=$(VERSION)-$(ITERATION)" \
		--build-arg "LICENSE=$(LICENSE)" \
		--build-arg "DESC=$(DESC)" \
		--build-arg "URL=$(URL)" \
		--build-arg "VENDOR=$(VENDOR)" \
		--build-arg "AUTHOR=$(MAINT)" \
		--build-arg "BINARY=$(BINARY)" \
		--build-arg "SOURCE_URL=$(SOURCE_URL)" \
		--build-arg "CONFIG_FILE=$(CONFIG_FILE)" \
		--tag $(BINARY) .

# This builds a Homebrew formula file that can be used to install this app from source.
# The source used comes from the released version on GitHub. This will not work with local source.
# This target is used by Travis CI to update the released Formula when a new tag is created.
formula: $(BINARY).rb
v$(VERSION).tar.gz.sha256:
	# Calculate the SHA from the Github source file.
	curl -sL $(URL)/archive/v$(VERSION).tar.gz | openssl dgst -r -sha256 | tee $@
$(BINARY).rb: v$(VERSION).tar.gz.sha256 init/homebrew/$(FORMULA).rb.tmpl
	# Creating formula from template using sed.
	sed -e "s/{{Version}}/$(VERSION)/g" \
		-e "s/{{Iter}}/$(ITERATION)/g" \
		-e "s/{{SHA256}}/$(shell head -c64 $<)/g" \
		-e "s/{{Desc}}/$(DESC)/g" \
		-e "s%{{URL}}%$(URL)%g" \
		-e "s%{{SOURCE_PATH}}%$(SOURCE_PATH)%g" \
		-e "s%{{SOURCE_URL}}%$(SOURCE_URL)%g" \
		-e "s%{{CONFIG_FILE}}%$(CONFIG_FILE)%g" \
		-e "s%{{Class}}%$(shell echo $(BINARY) | perl -pe 's/(?:\b|-)(\p{Ll})/\u$$1/g')%g" \
		init/homebrew/$(FORMULA).rb.tmpl | tee $(BINARY).rb
	# That perl line turns hello-world into HelloWorld, etc.

plugins: $(patsubst %,%.so,$(PLUGINS))
$(patsubst %,%.so,$(PLUGINS)):
	go build -o $@ -ldflags "$(VERSION_LDFLAGS)" -buildmode=plugin ./plugins/$(patsubst %.so,%,$@)

linux_plugins: plugins_linux_amd64 plugins_linux_i386 plugins_linux_arm64 plugins_linux_armhf
plugins_linux_amd64: $(patsubst %,%.linux_amd64.so,$(PLUGINS))
$(patsubst %,%.linux_amd64.so,$(PLUGINS)):
	GOOS=linux GOARCH=amd64 go build -o $@ -ldflags "$(VERSION_LDFLAGS)" -buildmode=plugin ./plugins/$(patsubst %.linux_amd64.so,%,$@)

plugins_darwin: $(patsubst %,%.darwin.so,$(PLUGINS))
$(patsubst %,%.darwin.so,$(PLUGINS)):
	GOOS=darwin go build -o $@ -ldflags "$(VERSION_LDFLAGS)" -buildmode=plugin ./plugins/$(patsubst %.darwin.so,%,$@)

# Extras

# Run code tests and lint.
test: lint
	# Testing.
	go test -race -covermode=atomic ./...
lint:
	# Checking lint.
	golangci-lint run $(GOLANGCI_LINT_ARGS)

# This is safe; recommended even.
dep: vendor
vendor: go.mod go.sum
	go mod vendor

# Don't run this unless you're ready to debug untested vendored dependencies.
deps: update vendor
update:
	go get -u -d

# Homebrew stuff. macOS only.

# Used for Homebrew only. Other distros can create packages.
install: man readme $(BINARY) plugins_darwin
	@echo - Done Building! -
	@echo - Local installation with the Makefile is only supported on macOS.
	@echo If you wish to install the application manually on Linux, check out the wiki: $(SOURCE_URL)/wiki/Installation
	@echo - Otherwise, build and install a package: make rpm -or- make deb
	@echo See the Package Install wiki for more info: $(SOURCE_URL)/wiki/Package-Install
	@[ "$(shell uname)" = "Darwin" ] || (echo "Unable to continue, not a Mac." && false)
	@[ "$(PREFIX)" != "" ] || (echo "Unable to continue, PREFIX not set. Use: make install PREFIX=/usr/local ETC=/usr/local/etc" && false)
	@[ "$(ETC)" != "" ] || (echo "Unable to continue, ETC not set. Use: make install PREFIX=/usr/local ETC=/usr/local/etc" && false)
	# Copying the binary, config file, unit file, and man page into the env.
	/usr/bin/install -m 0755 -d $(PREFIX)/bin $(PREFIX)/share/man/man1 $(ETC)/$(BINARY) $(PREFIX)/share/doc/$(BINARY) $(PREFIX)/lib/$(BINARY)
	/usr/bin/install -m 0755 -cp $(BINARY) $(PREFIX)/bin/$(BINARY)
	/usr/bin/install -m 0755 -cp *darwin.so $(PREFIX)/lib/$(BINARY)/
	/usr/bin/install -m 0644 -cp $(BINARY).1.gz $(PREFIX)/share/man/man1
	/usr/bin/install -m 0644 -cp examples/$(CONFIG_FILE).example $(ETC)/$(BINARY)/
	[ -f $(ETC)/$(BINARY)/$(CONFIG_FILE) ] || /usr/bin/install -m 0644 -cp examples/$(CONFIG_FILE).example $(ETC)/$(BINARY)/$(CONFIG_FILE)
	/usr/bin/install -m 0644 -cp LICENSE *.html examples/* $(PREFIX)/share/doc/$(BINARY)/

@ -1,133 +1,4 @@

|

|||

<img width="320px" src="https://raw.githubusercontent.com/wiki/davidnewhall/unifi-poller/images/unifi-poller-logo.png">

|

||||

# prometheus

|

||||

|

||||

[](https://discord.gg/KnyKYt2)

|

||||

[](https://twitter.com/TwitchCaptain)

|

||||

[](http://grafana.com/dashboards?search=unifi-poller)

|

||||

[](https://hub.docker.com/r/golift/unifi-poller)

|

||||

[](https://www.somsubhra.com/github-release-stats/?username=davidnewhall&repository=unifi-poller)

|

||||

|

||||

[](https://github.com/golift/unifi)

|

||||

[](https://github.com/golift/application-builder)

|

||||

[](https://github.com/davidnewhall/unifi-poller)

|

||||

[](https://travis-ci.org/davidnewhall/unifi-poller)

|

||||

|

||||

Collect your UniFi controller data and report it to an InfluxDB instance,

|

||||

or export it for Prometheus collection. Prometheus support is

|

||||

[new](https://github.com/davidnewhall/unifi-poller/issues/88), and much

|

||||

of the documentation still needs to be updated; 12/2/2019.

|

||||

[Ten Grafana Dashboards](http://grafana.com/dashboards?search=unifi-poller)

|

||||

included; with screenshots. Five for InfluxDB and five for Prometheus.

|

||||

|

||||

## Installation

|

||||

[See the Wiki!](https://github.com/davidnewhall/unifi-poller/wiki/Installation)

|

||||

We have a special place for [Docker Users](https://github.com/davidnewhall/unifi-poller/wiki/Docker).

|

||||

I'm willing to help if you have troubles.

|

||||

Open an [Issue](https://github.com/davidnewhall/unifi-poller/issues) and

|

||||

we'll figure out how to get things working for you. You can also get help in

|

||||

the #unifi-poller channel on the [Ubiquiti Discord server](https://discord.gg/KnyKYt2).

|

||||

I've also [provided a forum post](https://community.ui.com/questions/Unifi-Poller-Store-Unifi-Controller-Metrics-in-InfluxDB-without-SNMP/58a0ea34-d2b3-41cd-93bb-d95d3896d1a1) you may use to get additional help.

|

||||

|

||||

## Description

|

||||

[Ubiquiti](https://www.ui.com) makes networking devices like switches, gateways

|

||||

(routers) and wireless access points. They have a line of equipment named

|

||||

[UniFi](https://www.ui.com/products/#unifi) that uses a

|

||||

[controller](https://www.ui.com/download/unifi/) to keep stats and simplify network

|

||||

device configuration. This controller can be installed on Windows, macOS and Linux.

|

||||

Ubiquiti also provides a dedicated hardware device called a

|

||||

[CloudKey](https://www.ui.com/unifi/unifi-cloud-key/) that runs the controller software. More recently they've developed the Dream Machine; it's still in

|

||||

beta / early access, but UniFi Poller can collect its data!

|

||||

|

||||

UniFi Poller is a small Golang application that runs on Windows, macOS, Linux or

|

||||

Docker. In Influx-mode it polls a UniFi controller every 30 seconds for

|

||||

measurements and exports the data to an Influx database. In Prometheus mode the

|

||||

poller opens a web port and accepts Prometheus polling. It converts the UniFi

|

||||

Controller API data into Prometheus exports on the fly.
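
To make "on the fly" concrete, here is a minimal Go sketch of a handler that asks the controller for data each time it is scraped and writes the answer in the Prometheus text format. It is an illustration only: `fetchClientCount`, `metricsHandler`, `scrapeOnce` and the metric name `example_unifi_clients` are hypothetical and do not come from the UniFi Poller source, which uses the Prometheus client library rather than hand-written output.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
)

// fetchClientCount stands in for a call to the UniFi controller API.
// Hypothetical: the real poller collects far more than one number.
func fetchClientCount() int { return 3 }

// metricsHandler queries the (fake) controller on every scrape and writes
// the result in the Prometheus text exposition format.
func metricsHandler(w http.ResponseWriter, _ *http.Request) {
	w.Header().Set("Content-Type", "text/plain; version=0.0.4")
	fmt.Fprintln(w, "# TYPE example_unifi_clients gauge")
	fmt.Fprintf(w, "example_unifi_clients %d\n", fetchClientCount())
}

// scrapeOnce simulates a single Prometheus scrape without opening a port.
func scrapeOnce() string {
	rec := httptest.NewRecorder()
	req := httptest.NewRequest(http.MethodGet, "/metrics", nil)
	metricsHandler(rec, req)
	return rec.Body.String()
}

func main() {
	fmt.Print(scrapeOnce())
	// A real exporter would listen on a port instead, for example:
	//   http.HandleFunc("/metrics", metricsHandler)
	//   http.ListenAndServe("0.0.0.0:9130", nil)
}
```

Nothing is stored between scrapes in this sketch; each request triggers a fresh fetch, which is the behavior the paragraph above describes.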

|

||||

|

||||

This application requires your controller to be running all the time. If you run

|

||||

a UniFi controller, there's no excuse not to install

|

||||

[Influx](https://github.com/davidnewhall/unifi-poller/wiki/InfluxDB) or

|

||||

[Prometheus](https://prometheus.io),

|

||||

[Grafana](https://github.com/davidnewhall/unifi-poller/wiki/Grafana) and this app.

|

||||

You'll have a plethora of data at your fingertips and the ability to craft custom

|

||||

graphs to slice the data any way you choose. Good luck!

|

||||

|

||||

## Backstory

|

||||

I found a simple piece of code on GitHub that sorta did what I needed;

|

||||

we all know that story. I wanted more data, so I added more data collection.

|

||||

I believe I've completely rewritten every piece of original code, except the

|

||||

copyright/license file and that's fine with me. I probably wouldn't have made

|

||||

it this far if [Garrett](https://github.com/dewski/unifi) hadn't written the

|

||||

original code I started with. Many props my man.

|

||||

|

||||

The original code pulled only the client data. This app now pulls data

|

||||

for clients, access points, security gateways, dream machines and switches. I

|

||||

used to own two UAP-AC-PROs, one USG-3 and one US-24-250W, but have since upgraded

|

||||

a few devices. Many other users have also provided feedback to improve this app,

|

||||

and we have reports of it working on nearly every switch, AP and gateway.

|

||||

|

||||

## What's this data good for?

|

||||

I've been trying to get my UAP data into Grafana. Sure, google search that.

|

||||

You'll find [this](https://community.ubnt.com/t5/UniFi-Wireless/Grafana-dashboard-for-UniFi-APs-now-available/td-p/1833532). What if you don't want to deal with SNMP?

|

||||

Well, here you go. I've replicated 400% of what you see on those SNMP-powered

|

||||

dashboards with this Go app running on the same mac as my UniFi controller.

|

||||

All without enabling SNMP or trying to understand those OIDs. Mad props

|

||||

to [waterside](https://community.ubnt.com/t5/user/viewprofilepage/user-id/303058)

|

||||

for making this dashboard; it gave me a fantastic start to making my own dashboards.

|

||||

|

||||

## Operation

|

||||

You can control this app with puppet, chef, saltstack, homebrew or a simple bash

|

||||

script if you need to. Packages are available for macOS, Linux and Docker.

|

||||

It comes with a systemd service unit that allows you to automatically start it up on most Linux hosts.

|

||||

It works just fine on [Windows](https://github.com/davidnewhall/unifi-poller/wiki/Windows) too.

|

||||

Most people prefer Docker, and this app is right at home in that environment.

|

||||

|

||||

## Development

|

||||

The UniFi data extraction is provided as an [external library](https://godoc.org/golift.io/unifi),

|

||||

and you can import that code directly without futzing with this application. That

|

||||

means, if you wanted to do something like make telegraf collect your data instead

|

||||

of UniFi Poller you can achieve that with a little bit of Go code. You could write

|

||||

a small app that acts as a telegraf input plugin using the [unifi](https://github.com/golift/unifi)

|

||||

library to grab the data from your controller. As a bonus, all of the code in UniFi Poller is

|

||||

[in libraries](https://godoc.org/github.com/davidnewhall/unifi-poller/pkg)

|

||||

and can be used in other projects.

|

||||

|

||||

## What's it look like?

|

||||

|

||||

There are five dashboards available for each backend. Below you'll find screenshots of a few.

|

||||

|

||||

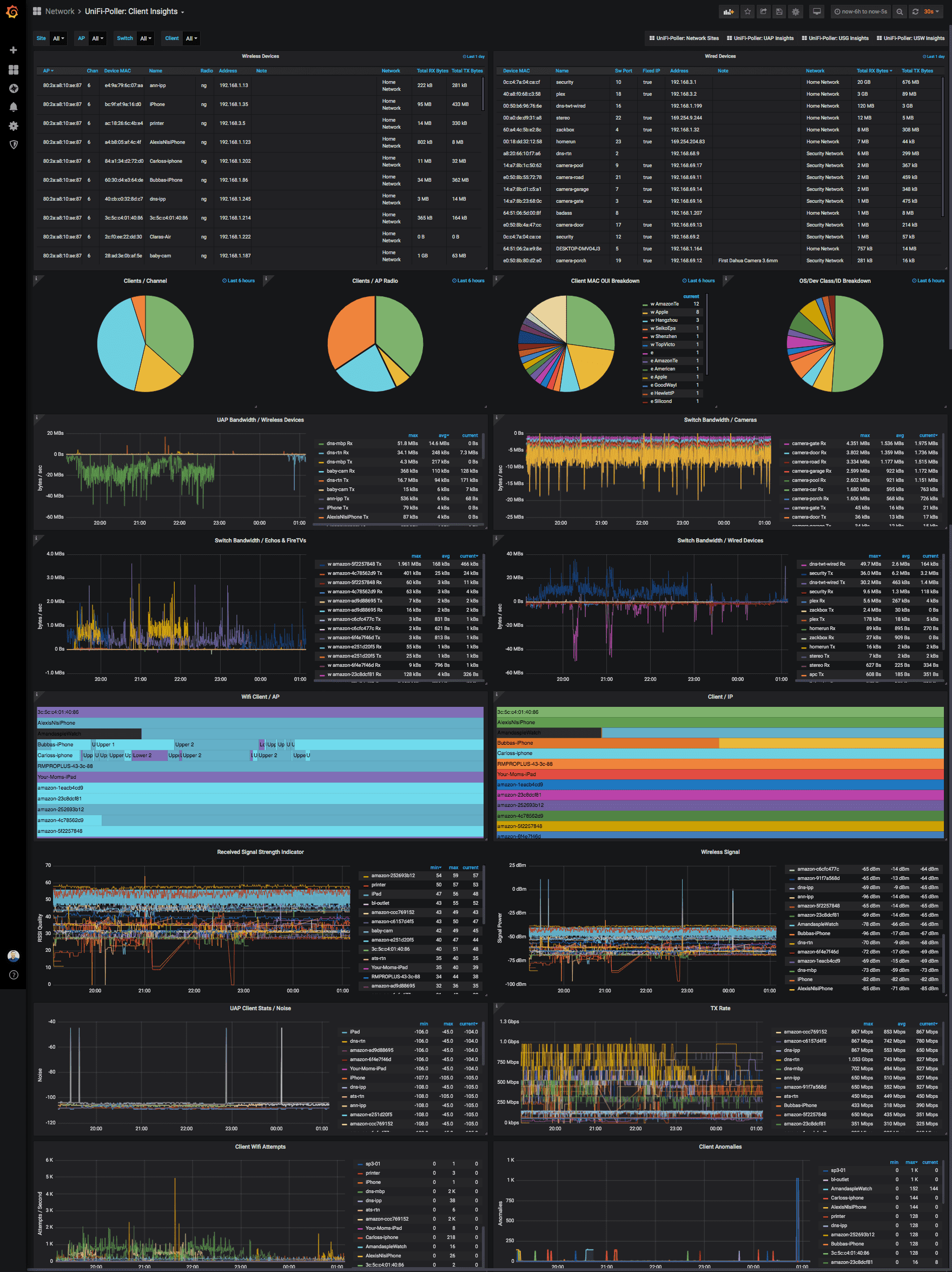

##### Client Dashboard (InfluxDB)

|

||||

|

||||

|

||||

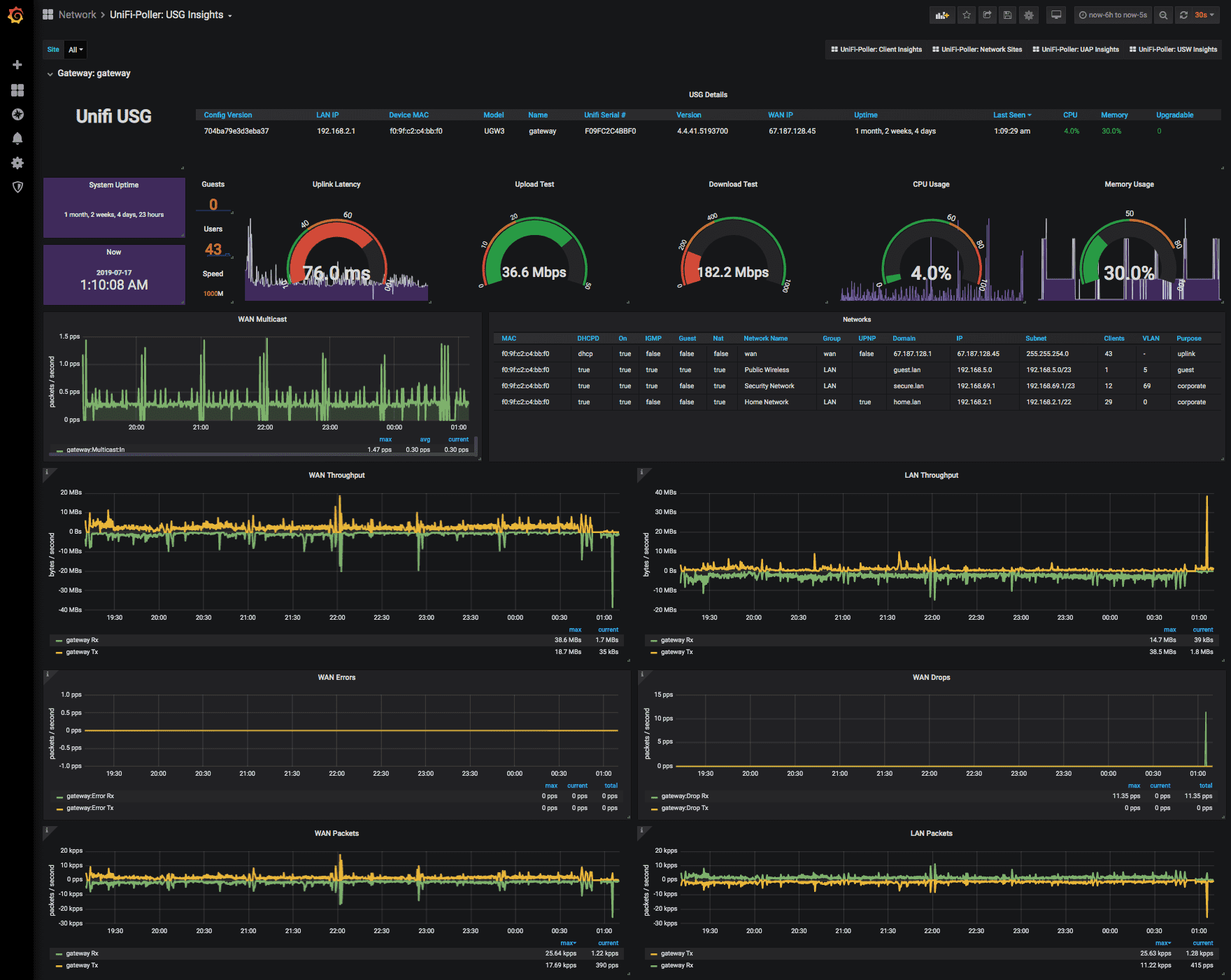

##### USG Dashboard (InfluxDB)

|

||||

|

||||

|

||||

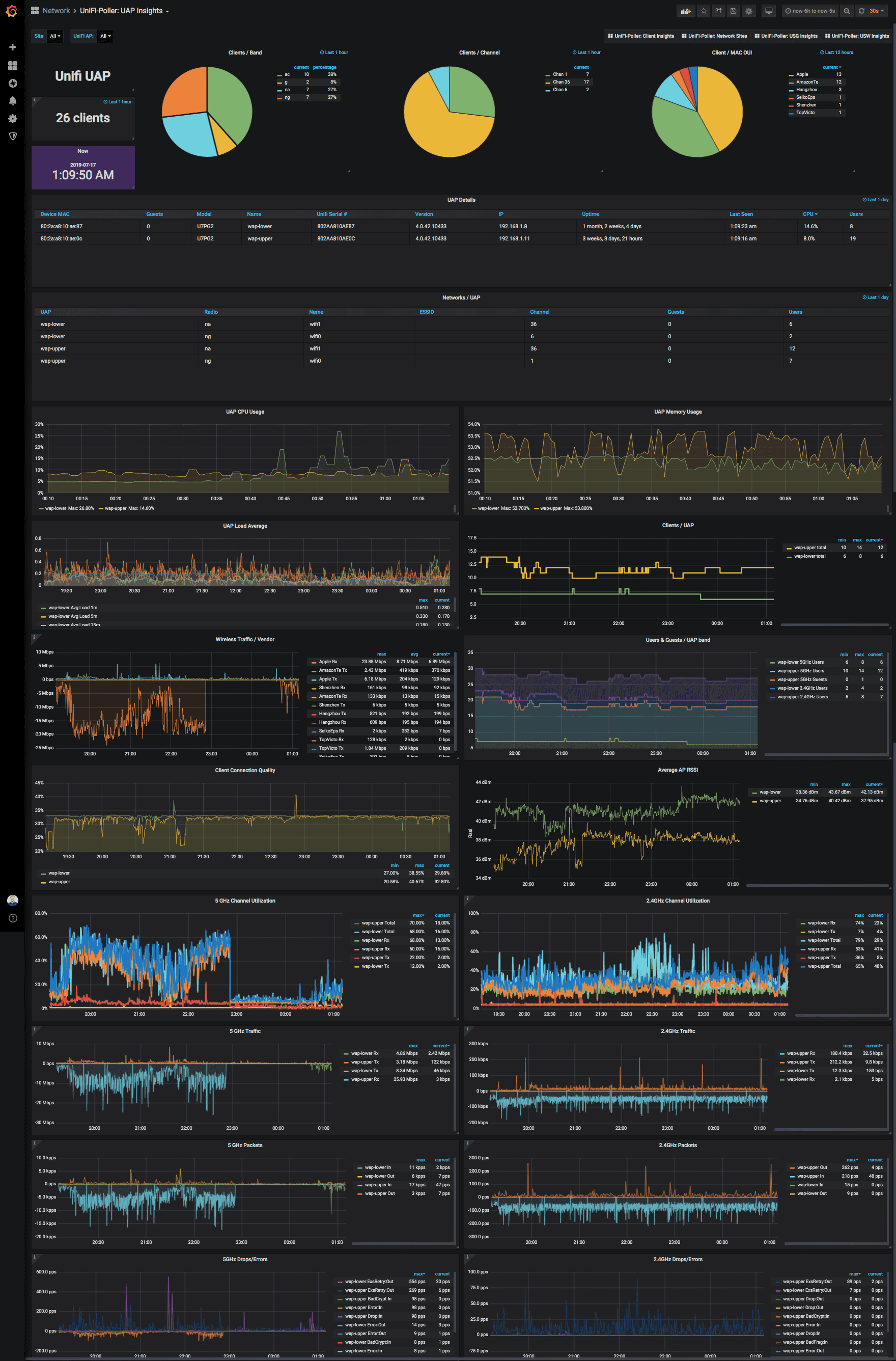

##### UAP Dashboard (InfluxDB)

|

||||

|

||||

|

||||

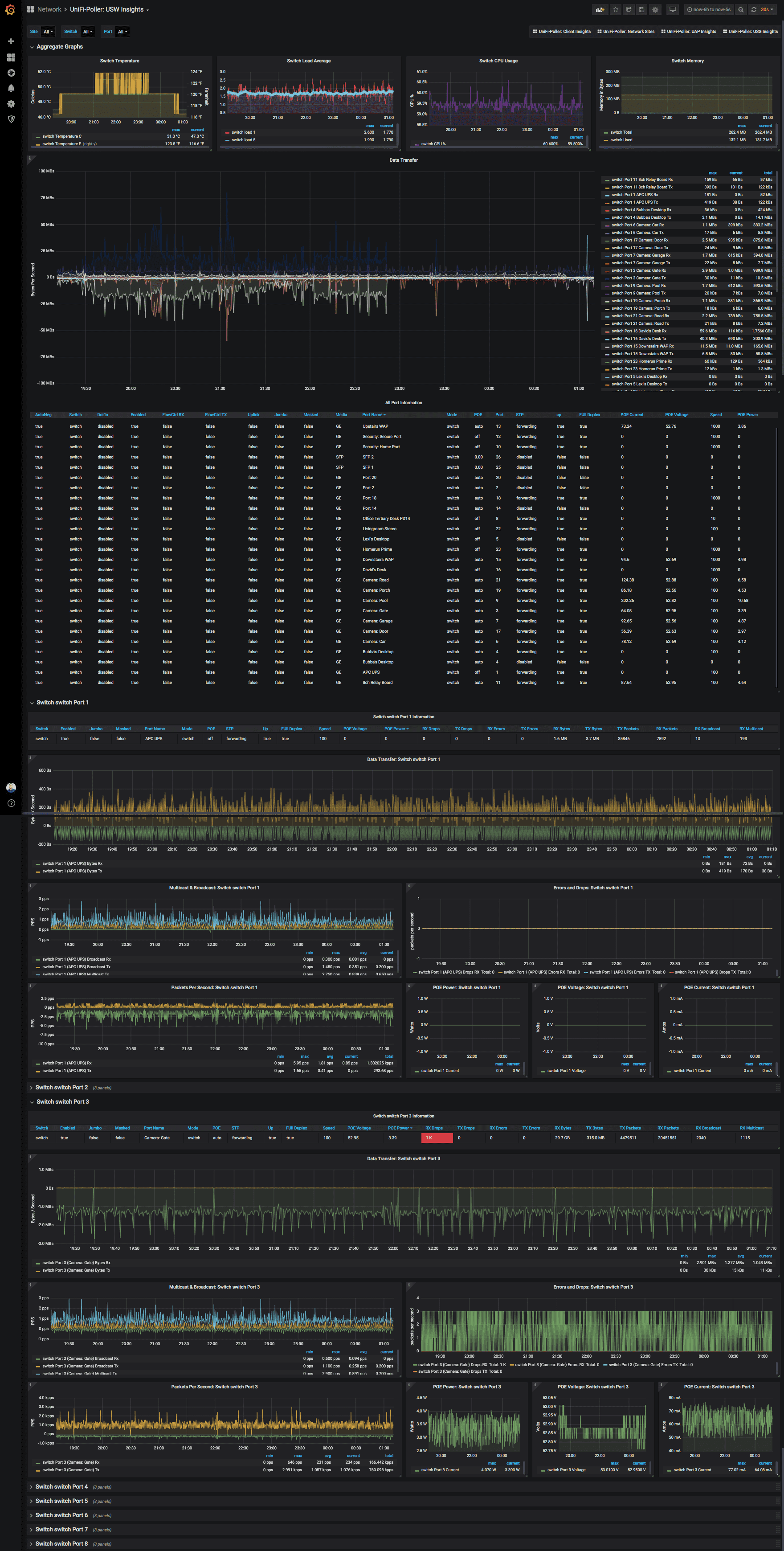

##### USW / Switch Dashboard (InfluxDB)

|

||||

You can drill down into specific sites, switches, and ports. Compare ports in different

|

||||

sites side-by-side. So easy! This screenshot barely does it justice.

|

||||

|

||||

|

||||

## Integrations

|

||||

|

||||

The following fine folks are providing their services, completely free! These service

|

||||

integrations are used for things like storage, building, compiling, distribution and

|

||||

documentation support. This project succeeds because of them. Thank you!

|

||||

|

||||

<p style="text-align: center;">

|

||||

<a title="Jfrog Bintray" alt="Jfrog Bintray" href="https://bintray.com"><img src="https://docs.golift.io/integrations/bintray.png"/></a>

|

||||

<a title="GitHub" alt="GitHub" href="https://GitHub.com"><img src="https://docs.golift.io/integrations/octocat.png"/></a>

|

||||

<a title="Docker Cloud" alt="Docker" href="https://cloud.docker.com"><img src="https://docs.golift.io/integrations/docker.png"/></a>

|

||||

<a title="Travis-CI" alt="Travis-CI" href="https://Travis-CI.com"><img src="https://docs.golift.io/integrations/travis-ci.png"/></a>

|

||||

<a title="Homebrew" alt="Homebrew" href="https://brew.sh"><img src="https://docs.golift.io/integrations/homebrew.png"/></a>

|

||||

<a title="Go Lift" alt="Go Lift" href="https://golift.io"><img src="https://docs.golift.io/integrations/golift.png"/></a>

|

||||

<a title="Grafana" alt="Grafana" href="https://grafana.com"><img src="https://docs.golift.io/integrations/grafana.png"/></a>

|

||||

</p>

|

||||

|

||||

## Copyright & License

|

||||

<img style="float: right;" align="right" width="200px" src="https://raw.githubusercontent.com/wiki/davidnewhall/unifi-poller/images/unifi-poller-logo.png">

|

||||

|

||||

- Copyright © 2016 Garrett Bjerkhoel.

|

||||

- Copyright © 2018-2019 David Newhall II.

|

||||

- See [LICENSE](LICENSE) for license information.

|

||||

This package provides the interface to turn UniFi measurements into prometheus

|

||||

exported metrics. Requires the poller package for actual UniFi data collection.

|

||||

|

|

|

|||

|

|

@ -9,10 +9,10 @@ import (

|

|||

"sync"

|

||||

"time"

|

||||

|

||||

"github.com/davidnewhall/unifi-poller/pkg/poller"

|

||||

"github.com/prometheus/client_golang/prometheus"

|

||||

"github.com/prometheus/client_golang/prometheus/promhttp"

|

||||

"github.com/prometheus/common/version"

|

||||

"github.com/unifi-poller/poller"

|

||||

"golift.io/unifi"

|

||||

)

|

||||

|

||||

|

|

@ -1,101 +0,0 @@

|

|||

unifi-poller(1) -- Utility to poll UniFi Controller Metrics and store them in InfluxDB

|

||||

===

|

||||

|

||||

SYNOPSIS

|

||||

---

|

||||

`unifi-poller -c /etc/unifi-poller.conf`

|

||||

|

||||

This daemon polls a UniFi controller at a short interval and stores the collected

|

||||

measurements in an Influx Database. The measurements and metrics collected belong

|

||||

to every available site, device and client found on the controller, including

|

||||

UniFi Security Gateways, Access Points, Switches and possibly more.

|

||||

|

||||

Dashboards for Grafana are available.

|

||||

Find them at [Grafana.com](https://grafana.com/dashboards?search=unifi-poller).

|

||||

|

||||

DESCRIPTION

|

||||

---

|

||||

UniFi Poller is a small Golang application that runs on Windows, macOS, Linux or

|

||||

Docker. It polls a UniFi controller every 30 seconds for measurements and stores

|

||||

the data in an Influx database. See the example configuration file for more

|

||||

examples and default configurations.

|

||||

|

||||

* See the example configuration file for more examples and default configurations.

|

||||

|

||||

OPTIONS

|

||||

---

|

||||

`unifi-poller [-c <config-file>] [-j <filter>] [-h] [-v]`

|

||||

|

||||

-c, --config <config-file>

|

||||

Provide a configuration file (instead of the default).

|

||||

|

||||

-v, --version

|

||||

Display version and exit.

|

||||

|

||||

-j, --dumpjson <filter>

|

||||

This is a debug option; use this when you are missing data in your graphs,

|

||||

and/or you want to inspect the raw data coming from the controller. The

|

||||

filter accepts three options: devices, clients, other. This will print a

|

||||

lot of information. Recommend piping it into a file and/or into jq for

|

||||

better visualization. This requires a valid config file that contains

|

||||

working authentication details for a UniFi Controller. This only dumps

|

||||

data for sites listed in the config file. The application exits after

|

||||

printing the JSON payload; it does not daemonize or report to InfluxDB

|

||||

with this option. The `other` option is special. This allows you to request

|

||||

any API path. It must be enclosed in quotes with the word other. Example:

|

||||

unifi-poller -j "other /stat/admins"

|

||||

|

||||

-h, --help

|

||||

Display usage and exit.

|

||||

|

||||

CONFIGURATION

|

||||

---

|

||||

* Config File Default Location:

|

||||

* Linux: `/etc/unifi-poller/up.conf`

|

||||

* macOS: `/usr/local/etc/unifi-poller/up.conf`

|

||||

* Windows: `C:\ProgramData\unifi-poller\up.conf`

|

||||

* Config File Default Format: `TOML`

|

||||

* Possible formats: `XML`, `JSON`, `TOML`, `YAML`
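
The default locations listed above can be expressed as a small lookup keyed on the operating system. This is a sketch, not the application's code: `defaultConfigPath` is a hypothetical name, and falling back to the Linux path on other systems is an assumption made for the example.

```go
package main

import (
	"fmt"
	"runtime"
)

// defaultConfigPath maps a GOOS value to the default config file location
// documented in this manual. Hypothetical helper for illustration.
func defaultConfigPath(goos string) string {
	switch goos {
	case "darwin":
		return "/usr/local/etc/unifi-poller/up.conf"
	case "windows":
		return `C:\ProgramData\unifi-poller\up.conf`
	default:
		// Assumption: treat every other system like Linux.
		return "/etc/unifi-poller/up.conf"
	}
}

func main() {
	fmt.Println(defaultConfigPath(runtime.GOOS))
}
```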

|

||||

|

||||

The config file can be written in four different syntax formats. The application

|

||||

decides which one to use based on the file's name. If it contains `.xml` it will

|

||||

be parsed as XML. The same goes for `.json` and `.yaml`. If the filename contains

|

||||

none of these strings, then it is parsed as the default format, TOML. This option

|

||||

is provided so the application can be easily adapted to any environment.
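
The filename rule described above can be sketched in a few lines of Go. The helper name `formatFor` is hypothetical and the order of the checks is an assumption; only the rule itself (a name containing `.xml`, `.json` or `.yaml` selects that format, anything else is TOML) comes from this manual.

```go
package main

import (
	"fmt"
	"strings"
)

// formatFor returns the config syntax implied by a file name.
// Hypothetical helper: it mirrors the rule described in this manual,
// not the application's actual implementation.
func formatFor(name string) string {
	switch {
	case strings.Contains(name, ".xml"):
		return "XML"
	case strings.Contains(name, ".json"):
		return "JSON"
	case strings.Contains(name, ".yaml"):
		return "YAML"
	default:
		return "TOML" // the documented default format
	}
}

func main() {
	for _, name := range []string{"up.conf", "up.json", "up.xml", "up.yaml.example"} {
		fmt.Printf("%s -> %s\n", name, formatFor(name))
	}
}
```

Because the match is on a substring rather than a strict suffix, a name such as `up.yaml.example` would still be treated as YAML in this sketch.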

|

||||

|

||||

`Config File Parameters`

|

||||

|

||||

Configuration file (up.conf) parameters are documented in the wiki.

|

||||

|

||||

* [https://github.com/davidnewhall/unifi-poller/wiki/Configuration](https://github.com/davidnewhall/unifi-poller/wiki/Configuration)

|

||||

|

||||

`Shell Environment Parameters`

|

||||

|

||||

This application can be fully configured using shell environment variables.

|

||||

Find documentation for this feature on the Docker Wiki page.

|

||||

|

||||

* [https://github.com/davidnewhall/unifi-poller/wiki/Docker](https://github.com/davidnewhall/unifi-poller/wiki/Docker)

|

||||

|

||||

GO DURATION

|

||||

---

|

||||

This application uses Go time durations for the polling interval.

|

||||

The format is an integer followed by a time unit. You may append

|

||||

multiple time units to add them together. A few valid time units are:

|

||||

|

||||

ms (millisecond)

|

||||

s (second)

|

||||

m (minute)

|

||||

|

||||

Example Use: `35s`, `1m`, `1m30s`
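
For readers unfamiliar with the syntax, the example values above can be checked with Go's standard `time.ParseDuration`. The sketch below assumes the interval string is parsed with that function; the wrapper `intervalSeconds` is a hypothetical name used only for this example.

```go
package main

import (
	"fmt"
	"time"
)

// intervalSeconds parses a Go duration string and returns its length in
// seconds. Hypothetical wrapper around the standard time.ParseDuration.
func intervalSeconds(s string) (float64, error) {
	d, err := time.ParseDuration(s)
	if err != nil {
		return 0, err
	}
	return d.Seconds(), nil
}

func main() {
	for _, s := range []string{"35s", "1m", "1m30s"} {
		secs, err := intervalSeconds(s)
		if err != nil {
			fmt.Println("invalid interval:", err)
			continue
		}
		fmt.Printf("%s = %v seconds\n", s, secs)
	}
}
```

Units are added together, so `1m30s` is 90 seconds.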

|

||||

|

||||

AUTHOR

|

||||

---

|

||||

* Garrett Bjerkhoel (original code) ~ 2016

|

||||

* David Newhall II (rewritten) ~ 4/20/2018

|

||||

* David Newhall II (still going) ~ 6/7/2019

|

||||

|

||||

LOCATION

|

||||

---

|

||||

* UniFi Poller: [https://github.com/davidnewhall/unifi-poller](https://github.com/davidnewhall/unifi-poller)

|

||||

* UniFi Library: [https://github.com/golift/unifi](https://github.com/golift/unifi)

|

||||

* Grafana Dashboards: [https://grafana.com/dashboards?search=unifi-poller](https://grafana.com/dashboards?search=unifi-poller)

|

||||

|

|

@ -1,13 +0,0 @@

|

|||

# Examples

|

||||

|

||||

This folder contains example configuration files in four

|

||||

supported formats. You can use any format you want for

|

||||

the config file, just give it the appropriate suffix for

|

||||

the format. An XML file should end with `.xml`, a JSON

|

||||

file with `.json`, and YAML with `.yaml`. The default

|

||||

format is always TOML and may have any _other_ suffix.

|

||||

|

||||

#### Dashboards

|

||||

This folder used to contain Grafana Dashboards.

|

||||

**They are now located at [Grafana.com](https://grafana.com/dashboards?search=unifi-poller).**

|

||||

Also see [Grafana Dashboards](https://github.com/davidnewhall/unifi-poller/wiki/Grafana-Dashboards) Wiki.

|

||||

|

|

@ -1,104 +0,0 @@

|

|||

# UniFi Poller primary configuration file. TOML FORMAT #

|

||||

########################################################

|

||||

|

||||

[poller]

|

||||

# Turns on line numbers, microsecond logging, and a per-device log.

|

||||

# The default is false, but I personally leave this on at home (four devices).

|

||||

# This may be noisy if you have a lot of devices. It adds one line per device.

|

||||

debug = false

|

||||

|

||||

# Turns off per-interval logs. Only startup and error logs will be emitted.

|

||||

# Recommend enabling debug with this setting for better error logging.

|

||||

quiet = false

|

||||

|

||||

# Load dynamic plugins. Advanced use; only sample mysql plugin provided by default.

|

||||

plugins = []

|

||||

|

||||

#### OUTPUTS

|

||||

|

||||

# If you don't use an output, you can disable it.

|

||||

|

||||

[prometheus]

|

||||

disable = false

|

||||

# This controls on which ip and port /metrics is exported when mode is "prometheus".

|

||||

# This has no effect in other modes. Must contain a colon and port.

|

||||

http_listen = "0.0.0.0:9130"

|

||||

report_errors = false

|

||||

|

||||

[influxdb]

|

||||

disable = false

|

||||

# InfluxDB does not require auth by default, so the user/password are probably unimportant.

|

||||

url = "http://127.0.0.1:8086"

|

||||

user = "unifipoller"

|

||||

pass = "unifipoller"

|

||||

# Be sure to create this database.

|

||||

db = "unifi"

|

||||

# If your InfluxDB uses a valid SSL cert, set this to true.

|

||||

verify_ssl = false

|

||||

# The UniFi Controller only updates traffic stats about every 30 seconds.

|

||||

# Setting this to something lower may lead to "zeros" in your data.

|

||||

# If you're getting zeros now, set this to "1m"

|

||||

interval = "30s"

|

||||

|

||||

#### INPUTS

|

||||

|

||||

[unifi]

|

||||

# Setting this to true and providing default credentials allows you to skip

|

||||

# configuring controllers in this config file. Instead you configure them in

|

||||

# your prometheus.yml config. Prometheus then sends the controller URL to

|

||||

# unifi-poller when it performs the scrape. This is useful if you have many,

|

||||

# or changing controllers. Most people can leave this off. See wiki for more.

|

||||

dynamic = false

|

||||

|

||||

# The following section contains the default credentials/configuration for any

|

||||

# dynamic controller (see above section), or the primary controller if you do not

|

||||

# provide one and dynamic is disabled. In other words, you can just add your

|

||||

# controller here and delete the following section. Either works.

|

||||

[unifi.defaults]

|

||||

role = "https://127.0.0.1:8443"

|

||||

url = "https://127.0.0.1:8443"

|

||||

user = "unifipoller"

|

||||

pass = "unifipoller"

|

||||

sites = ["all"]

|

||||

save_ids = false

|

||||

save_dpi = false

|

||||

save_sites = true

|

||||

verify_ssl = false

|

||||

|

||||

# You may repeat the following section to poll additional controllers.

|

||||

[[unifi.controller]]

|

||||

# Friendly name used in dashboards. Uses URL if left empty; which is fine.

|

||||

# Avoid changing this later because it will live forever in your database.

|

||||

# Multiple controllers may share a role. This allows grouping during scrapes.

|

||||

role = ""

|

||||

|

||||

url = "https://127.0.0.1:8443"

|

||||

# Make a read-only user in the UniFi Admin Settings.

|

||||

user = "unifipoller"

|

||||

pass = "4BB9345C-2341-48D7-99F5-E01B583FF77F"

|

||||

|

||||

# If the controller has more than one site, specify which sites to poll here.

|

||||

# Set this to ["default"] to poll only the first site on the controller.

|

||||

# A setting of ["all"] will poll all sites; this works if you only have 1 site too.

|

||||

sites = ["all"]

|

||||

|

||||

# Enable collection of Intrusion Detection System Data (InfluxDB only).

|

||||

# Only useful if IDS or IPS are enabled on one of the sites.

|

||||

save_ids = false

|

||||

|

||||

# Enable collection of Deep Packet Inspection data. This data breaks down traffic

|

||||

# types for each client and site, it powers a dedicated DPI dashboard.

|

||||

# Enabling this adds roughly 150 data points per client. That's 6000 metrics for

|

||||

# 40 clients. This adds a little bit of poller run time per interval and causes

|

||||

# more API requests to your controller(s). Don't let these "cons" sway you:

|

||||

# it's cool data. Please provide feedback on your experience with this feature.

|

||||

save_dpi = false

|

||||

|

||||

# Enable collection of site data. This data powers the Network Sites dashboard.

|

||||

# It's not valuable to everyone and setting this to false will save resources.

|

||||

save_sites = true

|

||||

|

||||

# If your UniFi controller has a valid SSL certificate (like Let's Encrypt),

|

||||

# you can enable this option to validate it. Otherwise, any SSL certificate is

|

||||

# valid. If you don't know if you have a valid SSL cert, then you don't have one.

|

||||

verify_ssl = false

|

||||

|

|

@ -1,51 +0,0 @@

|

|||

{

|

||||

"poller": {

|

||||

"debug": false,

|

||||

"quiet": false,

|

||||

"plugins": []

|

||||

},

|

||||

|

||||

"prometheus": {

|

||||

"disable": false,

|

||||

"http_listen": "0.0.0.0:9130",

|

||||

"report_errors": false

|

||||

},

|

||||

|

||||

"influxdb": {

|

||||

"disable": false,

|

||||

"url": "http://127.0.0.1:8086",

|

||||

"user": "unifipoller",

|

||||

"pass": "unifipoller",

|

||||

"db": "unifi",

|

||||

"verify_ssl": false,

|

||||

"interval": "30s"

|

||||

},

|

||||

|

||||

"unifi": {

|

||||

"dynamic": false,

|

||||

"defaults": {

|

||||

"role": "https://127.0.0.1:8443",

|

||||

"user": "unifipoller",

|

||||

"pass": "unifipoller",

|

||||

"url": "https://127.0.0.1:8443",

|

||||

"sites": ["all"],

|

||||

"save_ids": false,

|

||||

"save_dpi": false,

|

||||

"save_sites": true,

|

||||

"verify_ssl": false

|

||||

},

|

||||

"controllers": [

|

||||

{

|

||||

"role": "",

|

||||

"user": "unifipoller",

|

||||

"pass": "unifipoller",

|

||||

"url": "https://127.0.0.1:8443",

|

||||

"sites": ["all"],

|

||||

"save_dpi": false,

|

||||

"save_ids": false,

|

||||

"save_sites": true,

|

||||

"verify_ssl": false

|

||||

}

|

||||

]

|

||||

}

|

||||

}

|

||||

|

|

@ -1,52 +0,0 @@

|

|||

<?xml version="1.0" encoding="UTF-8"?>

|

||||

<!--

|

||||

#######################################################

|

||||

# UniFi Poller primary configuration file. XML FORMAT #

|

||||

# provided values are defaults. See up.conf.example! #

|

||||

#######################################################

|

||||

|

||||

<plugin> and <site> are lists of strings and may be repeated.

|

||||

-->

|

||||

<poller debug="false" quiet="false">

|

||||

<!-- plugin></plugin -->

|

||||

|

||||

<prometheus disable="false">

|

||||

<http_listen>0.0.0.0:9130</http_listen>

|

||||

<report_errors>false</report_errors>

|

||||

</prometheus>

|

||||

|

||||

<influxdb disable="false">

|

||||

<interval>30s</interval>

|

||||

<url>http://127.0.0.1:8086</url>

|

||||

<user>unifipoller</user>

|

||||

<pass>unifipoller</pass>

|

||||

<db>unifi</db>

|

||||

<verify_ssl>false</verify_ssl>

|

||||

</influxdb>

|

||||

|

||||

<unifi dynamic="false">

|

||||

<default role="https://127.0.0.1:8443">

|

||||

<site>all</site>

|

||||

<user>unifipoller</user>

|

||||

<pass>unifipoller</pass>

|

||||

<url>https://127.0.0.1:8443</url>

|

||||

<verify_ssl>false</verify_ssl>

|

||||

<save_ids>false</save_ids>

|

||||

<save_dpi>false</save_dpi>

|

||||

<save_sites>true</save_sites>

|

||||

</default>

|

||||

|

||||

<!-- Repeat this stanza to poll additional controllers. -->

|

||||

<controller role="">

|

||||

<site>all</site>

|

||||

<user>unifipoller</user>

|

||||

<pass>unifipoller</pass>

|

||||

<url>https://127.0.0.1:8443</url>

|

||||

<verify_ssl>false</verify_ssl>

|

||||

<save_ids>false</save_ids>

|

||||

<save_dpi>false</save_dpi>

|

||||

<save_sites>true</save_sites>

|

||||

</controller>

|

||||

|

||||

</unifi>

|

||||

</poller>

|

||||

|

|

@ -1,52 +0,0 @@

|

|||

########################################################

|

||||

# UniFi Poller primary configuration file. YAML FORMAT #

|

||||

# provided values are defaults. See up.conf.example! #

|

||||

########################################################

|

||||

---

|

||||

|

||||

poller:

|

||||

debug: false

|

||||

quiet: false

|

||||

plugins: []

|

||||

|

||||

prometheus:

|

||||

disable: false

|

||||

http_listen: "0.0.0.0:9130"

|

||||

report_errors: false

|

||||

|

||||

influxdb:

|

||||

disable: false

|

||||

interval: "30s"

|

||||

url: "http://127.0.0.1:8086"

|

||||

user: "unifipoller"

|

||||

pass: "unifipoller"

|

||||

db: "unifi"

|

||||

verify_ssl: false

|

||||

|

||||

unifi:

|

||||

dynamic: false

|

||||

defaults:

|

||||

role: "https://127.0.0.1:8443"

|

||||

user: "unifipoller"

|

||||

pass: "unifipoller"

|

||||

url: "https://127.0.0.1:8443"

|

||||

sites:

|

||||

- all

|

||||

verify_ssl: false

|

||||

save_ids: false

|

||||

save_dpi: false

|

||||

save_sites: true

|

||||

|

||||

|

||||

controllers:

|

||||

# Repeat the following stanza to poll more controllers.

|

||||

- role: ""

|

||||

user: "unifipoller"

|

||||

pass: "unifipoller"

|

||||

url: "https://127.0.0.1:8443"

|

||||

sites:

|

||||

- all

|

||||

verify_ssl: false

|

||||

save_ids: false

|

||||

save_dpi: false

|

||||

save_sites: true

|

||||

|

|

@ -1,13 +1,10 @@

|

|||

module github.com/davidnewhall/unifi-poller

|

||||

module github.com/unifi-poller/promunifi

|

||||

|

||||

go 1.13

|

||||

|

||||

require (

|

||||

github.com/BurntSushi/toml v0.3.1 // indirect

|

||||

github.com/influxdata/influxdb1-client v0.0.0-20191209144304-8bf82d3c094d

|

||||

github.com/prometheus/client_golang v1.3.0

|

||||

github.com/prometheus/common v0.7.0

|

||||

github.com/spf13/pflag v1.0.5

|

||||

golift.io/cnfg v0.0.5

|

||||

github.com/unifi-poller/poller v0.0.1

|

||||

golift.io/unifi v0.0.400

|

||||

)

|

||||

|

|

|

|||

|

|

@ -24,8 +24,6 @@ github.com/golang/protobuf v1.3.2 h1:6nsPYzhq5kReh6QImI3k5qWzO4PEbvbIW2cwSfR/6xs

|

|||

github.com/golang/protobuf v1.3.2/go.mod h1:6lQm79b+lXiMfvg/cZm0SGofjICqVBUtrP5yJMmIC1U=

|

||||

github.com/google/go-cmp v0.3.1/go.mod h1:8QqcDgzrUqlUb/G2PQTWiueGozuR1884gddMywk6iLU=

|

||||

github.com/google/gofuzz v1.0.0/go.mod h1:dBl0BpW6vV/+mYPU4Po3pmUjxk6FQPldtuIdl/M65Eg=

|

||||

github.com/influxdata/influxdb1-client v0.0.0-20191209144304-8bf82d3c094d h1:/WZQPMZNsjZ7IlCpsLGdQBINg5bxKQ1K1sh6awxLtkA=

|

||||

github.com/influxdata/influxdb1-client v0.0.0-20191209144304-8bf82d3c094d/go.mod h1:qj24IKcXYK6Iy9ceXlo3Tc+vtHo9lIhSX5JddghvEPo=

|

||||

github.com/json-iterator/go v1.1.6/go.mod h1:+SdeFBvtyEkXs7REEP0seUULqWtbJapLOCVDaaPEHmU=

|

||||

github.com/json-iterator/go v1.1.8/go.mod h1:KdQUCv79m/52Kvf8AW2vK1V8akMuk1QjK/uOdHXbAo4=

|

||||

github.com/julienschmidt/httprouter v1.2.0/go.mod h1:SYymIcj16QtmaHHD7aYtjjsJG7VTCxuUUipMqKk8s4w=

|

||||

|

|

@ -65,6 +63,8 @@ github.com/stretchr/objx v0.1.1/go.mod h1:HFkY916IF+rwdDfMAkV7OtwuqBVzrE8GR6GFx+

|

|||

github.com/stretchr/testify v1.2.2/go.mod h1:a8OnRcib4nhh0OaRAV+Yts87kKdq0PP7pXfy6kDkUVs=

|

||||

github.com/stretchr/testify v1.3.0/go.mod h1:M5WIy9Dh21IEIfnGCwXGc5bZfKNJtfHm1UVUgZn+9EI=

|

||||

github.com/stretchr/testify v1.4.0/go.mod h1:j7eGeouHqKxXV5pUuKE4zz7dFj8WfuZ+81PSLYec5m4=

|

||||

github.com/unifi-poller/poller v0.0.1 h1:/SIsahlUEVJ+v9+C94spjV58+MIqR5DucVZqOstj2vM=

|

||||

github.com/unifi-poller/poller v0.0.1/go.mod h1:sZfDL7wcVwenlkrm/92bsSuoKKUnjj0bwcSUCT+aA2s=

|

||||

golang.org/x/crypto v0.0.0-20180904163835-0709b304e793/go.mod h1:6SG95UA2DQfeDnfUPMdvaQW0Q7yPrPDi9nlGo2tz2b4=

|

||||

golang.org/x/crypto v0.0.0-20190308221718-c2843e01d9a2/go.mod h1:djNgcEr1/C05ACkg1iLfiJU5Ep61QUkGW8qpdssI0+w=

|

||||

golang.org/x/net v0.0.0-20181114220301-adae6a3d119a/go.mod h1:mL1N/T3taQHkDXs73rZJwtUhF3w3ftmwwsq0BUmARs4=

|

||||

|

|

|

|||

|

|

@ -1,51 +0,0 @@

|

|||

-----BEGIN PGP PUBLIC KEY BLOCK-----

|

||||

|

||||

mQINBF3ozJsBEADKOz87H0/nBgoiY/CXC2PKKFCvxxUEmuub+Xjs2IjvMmFjAXG/

|

||||

d4JP8ZUfuIL2snYZbaQ8IwsbHoElGEwTXeZeYwJKZpmOua1vd9xASf1NFzGnNlCk

|

||||

kdgi5CSiNQNphHRUYFVJWD+X+GjMfv2aEpt0FXSx2a95YS2Rqq4fSEfjT6xOgVXQ

|

||||

JUlusAZ4b22or9gLIYzFc0VCtSQthpgdlMIAitN7t2q+67v3TFyt0U3LO1jNnWGS

|

||||

FBM83gqCFT5ZITgH8jmVq9mn0odv/R2OTT5QEHBikP+WWjbKHqrFisFOQYza8qro

|

||||

Gn86SUAqGU0EQvMNk62YPnMD+AWEuDaZx53sJaSgzuEGG0lZYYrSdz0Dk+HIHrPd

|

||||

IsVn6s/BEHRFuZTLg0h90aSJB4TCK/HKux6hKcPKYySZcRDOxPJjQqUO37iPU2ak

|

||||

bDkOiuUrW0HcuV5/Sw6n5k8rDKub3l1wkg2Wfsgr8PHl0y5GtfA8kFBpmAQnBXwA

|

||||

mrfTz6CLf2WzYHfzxVvqOCy8Vo7yB9LpFLq27Z8eeY2wsRdQmUqRGLK7QvZEepQF

|

||||

QW3JUfseSW8dqpMPOOf0zN7P1UE/fp3wA7BDvTdu+IpMKV2SZvwkvhtCmoiI2dWo

|

||||

QvmgaKbxWL1NgLqc7xJWntxvTwKv4CLbu5DqHAn6NMOmO0lHuw08QNYl3wARAQAB

|

||||

tBhHbyBMaWZ0IDxjb2RlQGdvbGlmdC5pbz6JAk4EEwEIADgWIQS5PdZu+Y5U4urg

|

||||

JboBZq00q8WlfAUCXejMmwIbAwULCQgHAgYVCgkICwIEFgIDAQIeAQIXgAAKCRAB

|

||||

Zq00q8WlfN/CD/9Rb3WzQK5aFBmIYVxfOxsBeyMKf1zTRTnwM1Y7RqsT0m4NnlcT

|

||||

jAiE93H6+pibW5T9ujsL3wCGGf69sXo34lv112DJ5hDgR1TaYO5AQWpUKGqq5XNQ

|

||||

t+R8O50Xro34CTqrxrfCj5YD+zrZaDvr6F69JJSzUtO1MCx5j1Ujn5YF7IammSno

|

||||

nbufHKpv4jGeKSCtutPpOPrdR9JXdVG7Uo3XIiDn1z2Rv5MtC0uwiPCbAWhu4XbB

|

||||

su858TBcin5jgWJYjiab7IX7MNcZUgK6aCR/1qUG/rhXqjCz3Vm7XJ+hb5afAASR

|

||||

AJq8vqscmGgz0K4Ct9dI1OG0BhGs8mBUcRBVqLKAtc061SkM8oeive9JpCcVSyij

|

||||

6X+YVBESoFWxEO3ACNQ/mWGBIOOTT27Dabob5IOuBLSLJZdVB5tT9Py91JEd08Xi

|

||||

/O12+zpBcq6XUS/cUOiffDVmfByA3F8YmpgScvgdLxHc39fdaz4YtR7FbgSMJDux

|

||||

BXdT+GaSFXbYzQV0jUxkeesJr9/ZJPMVm+Q3mD91mTZ6yJ/mJbrsBhTTyx+gyd7O

|

||||

RusqAYSiTTjRdG6ZzPit8BGoX7s8TIq/dIxb5xnkXgVaaMORHjrpC2Ll9d4olsKs

|

||||

zyaXcSYZ+HohPI3JNU/Mr6bRnHDAOk7849ranOoWX+eHG+JyET4ko6wlObkCDQRd

|

||||

6MybARAA1QJ1onzGlXh1HHgMa3wy7WxK7jJ4anPnT+Nt2t4LvTFUq46LL2hgzmvK

|

||||

zJ5tFDrMUBCyybk1s/+hJow+bRBYIwQDkKuuBXq1LLSk2gheMDNaQJxr55EGeMVL

|

||||

drXuHQg6mFm2b6JgkEzu2srnIo9qaJMsj3i5O3ZfPgGVUda33r/66Izb3P9kN6xN

|

||||

wWvLtt+dcPYVxbX8X8d33p9KRw8yYYn0dEmj5rpXrm00oiSEuYj9Y/aPKHwbhrkj

|

||||

1yRdK9SawQBaTb8umaccpAK4tuhuzx5LOKzlO6D0ZydbCAkRbKshlO7bYVAkSkSI

|

||||

ldDIMQY0mG4P4A0s/qBjTtFleeg1roJkWDqchhuq6D+M1x4ZM3W4k1kyQPX6b9c6

|

||||

7v6n+2WPWtqOIahvRLb7zXkonH6TOv3Oopzoj16luSauXwXQhfcJ/8B+rpuEdsdJ

|

||||

mCsr9UyUHNC6/Dt+Sr82Tkqg74VkCkv00zXb85EYTuXx7AJeiCrNjEG4D8UQUGC7

|

||||

vyYwAPFAgvhNM/zA8yitflj45bpGcgrXoJ20NmLQLgJKJYuVODmJzn2ylcXQlhNf

|

||||

P1DwDfzUIeIX04Jg/qbnDseGrmp/jXq0oqQ8LujH+v8KZbBMminlmLIKJmO2TWiM

|

||||

WfKiNFCD5kQWlqtxZxlxuisRTqp9CrVxGeayxQ1uzX9NhMQjA+EAEQEAAYkCNgQY

|

||||

AQgAIBYhBLk91m75jlTi6uAlugFmrTSrxaV8BQJd6MybAhsMAAoJEAFmrTSrxaV8

|

||||

TswP/34pBQmyvyM5zxl7lRpHdptU+Zp9HskjeFGTgJZihRpRu/CzdFTSq2MXpaBW

|

||||

RLlkVEiOh8txX5bnA3DAFfTyKJ26Cc7WOIPXuGioX7rV5tqWHIQ3FO0QeGpwONli

|

||||

VGY9cGWMRfe5KfIxcUJY5ckI4c9leAnHjcuM0f/0W4xWg4pofK4zD6jvneUB8IA1

|

||||

KPHIuzO0EKCFaoedKkW5S3waVc8SaeYTk9R0Dl2tNbK9Q7pIPBt0bH7dwnTt7nCr

|

||||

tJgS7dpKjRo6xpSfN1j2P0E7bf5oT94wKM8ZTMSWqJtyNgYfDlAs5RUMkrAijdXb

|

||||